Synergy

Key Words:

openFrameworks, performance, improv, dance

Project GitHub:

https://github.com/reginaflores/majorstudio1_2014

Video:

Description:

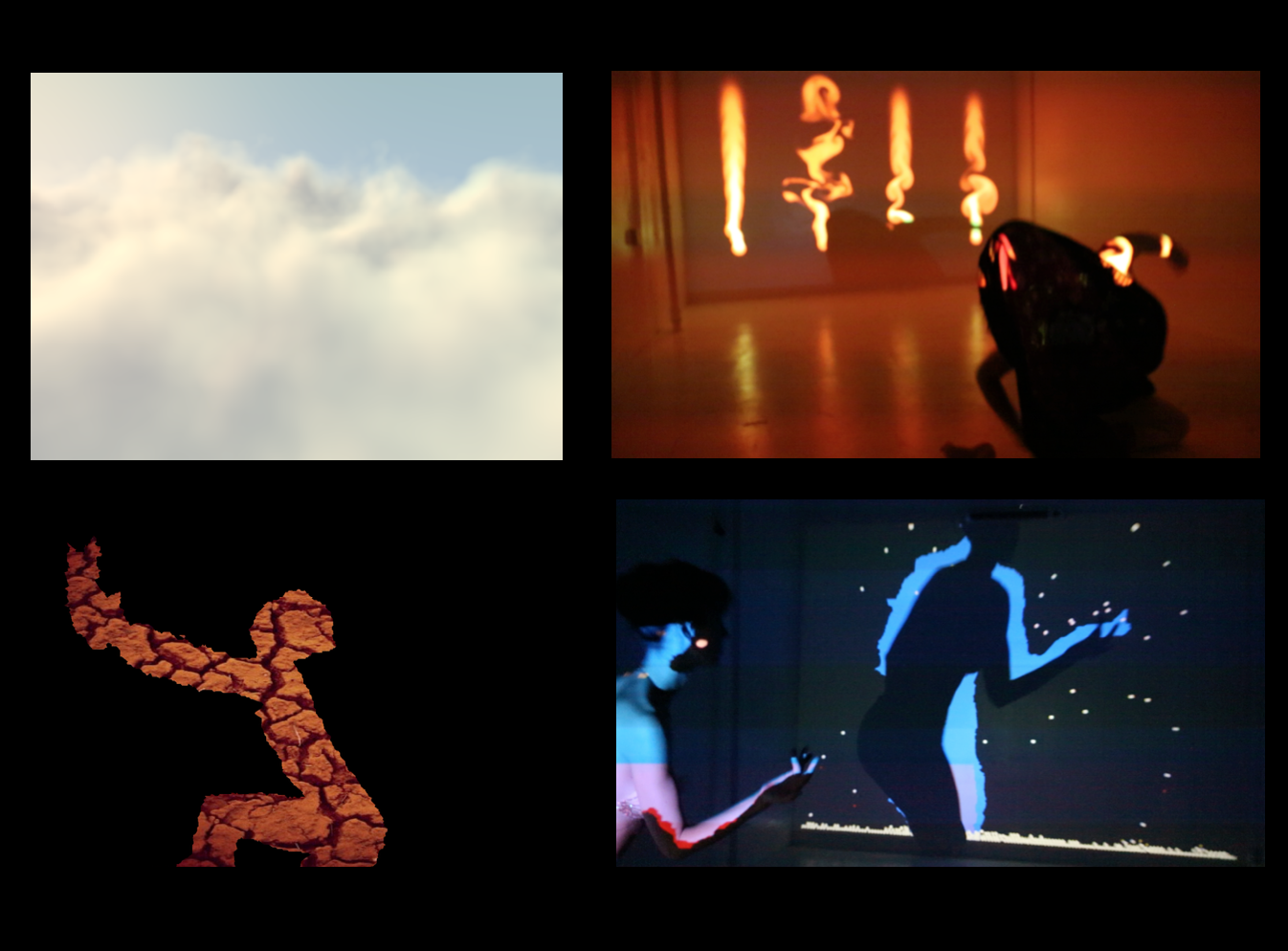

Synergy is a performance piece that sits at the intersection of design, technology, and improvisational performance. It explores improvised dance movement, interaction with projected light, and visualizations created in the openFrameworks toolkit using a Kinect sensor. Synergy was filmed on a closed set at the Alchemical Theatre in New York City and was enacted by the Synergy Collective, a six-person production team composed of a creative coder, a dancer, a DJ, a videographer, a creative consultant, and a general alchemist. The filmed performance was then cut into a 2.5-minute short video, which is presented as the representation of the work.

This project is an exploration of the human body as a canvas, of improvisational movement, and of the interactions between the physical and the digital. In what way can computer graphics, and interaction with computerized visuals, transform the human body and a blank stage? By uniting the physical (the stage) with the digital (computer graphics), and the physical human form with the projected human form, we create a synergistic experience: one in which the whole is greater than the sum of its individual elements.

Synergy was achieved using a Kinect sensor to track motion and openFrameworks to create the visual effects. I wrote several openFrameworks programs (available at the GitHub link provided above) using an array of add-ons outlined in Figure 3. An Optoma digital projector was used to project the graphics onto the stage and the dancer’s body. The piece was filmed using a Canon 5D camera.

The majority of the code research and development relied on the ofxKinectProjectorToolKit, ofxBox2D, and ofxFluid add-ons. The ofxKinectProjectorToolKit enables users to integrate the Kinect with projected light. Using its calibration program, one must first calibrate the performance space so that the Kinect has depth data unique to that particular space. Once the space was calibrated, I built a program that reads the contours of a person’s body moving in it. Using this contour data, I then built a program that lets a dancer interact with the projections.

I first used ofxBox2D so that the contour of the dancer’s body was mapped to an edge shape that could catch falling balls; this is how I simulated rain. I then used ofxFluid to enable the dancer to interact with fluid motion, which simulated fire.

I also built an alpha-masking program that produces an effect on my computer screen while the dancer moves. Using a shader, I “masked” the dancer’s body so that an image is projected in the shape of her body. To produce the “earth element” effect, I used an image of cracked desert earth. For the “air element,” I used a shader (refer to the code for full reference documentation) that simulates clouds; this was purely a projection on the stage.

Finally, I integrated ofxPostProcessing into one of my programs and included a GUI that I built using ofxUI. The ofxPostProcessing add-on allowed me to apply additional effects to my graphics, and through the GUI I could manipulate these effects live while the dancer performed.
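To make the alpha-masking idea concrete, here is a minimal CPU sketch in plain C++ of what such a mask does per pixel. It is not the shader from the project repository. The `Rgba` type, the `applyBodyMask` function, and the assumption that the body silhouette arrives as an 8-bit mask (0 outside the body, 255 inside) are all hypothetical names and choices made for illustration.

```cpp
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical RGBA pixel type, used only for this illustration.
struct Rgba {
    std::uint8_t r, g, b, a;
};

// Returns a copy of `image` whose alpha is scaled by `mask`.
// Assumption: mask[i] is 0 outside the dancer's silhouette and 255 inside it,
// with intermediate values producing soft edges.
// Pixels without a corresponding mask entry are left fully transparent.
std::vector<Rgba> applyBodyMask(const std::vector<Rgba>& image,
                                const std::vector<std::uint8_t>& mask) {
    std::vector<Rgba> out(image.size());  // value-initialized: all channels 0
    for (std::size_t i = 0; i < image.size() && i < mask.size(); ++i) {
        out[i] = image[i];
        // Scale the existing alpha by the mask value (integer math, 0..255).
        out[i].a = static_cast<std::uint8_t>((image[i].a * mask[i]) / 255);
    }
    return out;
}
```

In a live setting this per-pixel operation would normally run on the GPU in a fragment shader, which is the route described above. The loop here only shows the arithmetic: wherever the mask is 0 the texture (for example, the cracked-earth image) becomes transparent, and wherever it is 255 the texture shows through unchanged.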

The same openFrameworks software was used in a later work called The Second Body, a collaborative performance piece with Kristin Slater (kristinslaterbinney.com) and Marcos Berenhauser (mscheidt.com).